Last year, Chrome announced that it would begin treating cookies as SameSite=Lax by default when no SameSite attribute is explicitly set. I wrote about this change at the time (https://www.jardinesoftware.net/2019/10/28/samesite-by-default-in-2020/). This change could have an impact on some sites, so it is important that you test it. The changes are supposed to start rolling out in February (this month). The linked post shows how to force these defaults in both Firefox and Chrome. In … [Read more...] about Chrome is making some changes.. are you ready?
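One way to avoid depending on the browser default is to set the SameSite attribute explicitly on each cookie. The sketch below is a minimal illustration using Python's standard `http.cookies` module (Python 3.8 or later, where the `samesite` attribute is supported). The function name `build_session_cookie_header` and the cookie name `session` are hypothetical and not taken from the linked post.

```python
from http.cookies import SimpleCookie


def build_session_cookie_header(value: str, samesite: str = "Lax") -> str:
    """Return a Set-Cookie header value with an explicit SameSite attribute.

    Hypothetical helper for illustration; the cookie name "session" is an
    assumption, not something defined by the original post.
    """
    if samesite not in ("Strict", "Lax", "None"):
        raise ValueError(f"unsupported SameSite value: {samesite}")

    cookie = SimpleCookie()
    cookie["session"] = value
    morsel = cookie["session"]
    morsel["samesite"] = samesite  # explicit, so the browser default never applies
    morsel["secure"] = True        # browsers require Secure when SameSite=None
    morsel["httponly"] = True
    morsel["path"] = "/"
    return morsel.OutputString()


if __name__ == "__main__":
    # Prints the value to send in a Set-Cookie response header.
    print(build_session_cookie_header("abc123"))
```

Whether Lax, Strict, or None is the right value depends on how the cookie is used in cross-site requests, which is the behavior worth testing before the rollout.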

developer

Thinking about starting a bug bounty? Do this first.

Application security has become an important topic within our organizations. We have come to understand that the data that we deem sensitive and critical to our business is made available through these applications. With breaches happening all the time, it is critical to take reasonable steps to help protect that data by ensuring that our applications are implementing strong controls. Over the years, testing has been the main avenue for "implementing" security into applications. We have seen a … [Read more...] about Thinking about starting a bug bounty? Do this first.

Overview of Web Security Policies

A vulnerability was just identified in your website. How would you know? The process for disclosing a vulnerability to an organization is often very difficult to identify. Whether or not you offer any type of bounty for security bugs, it is important that there is a clear path for someone to notify you of a potential concern. Unfortunately, the process is different for every application, and it can be very difficult to find. For someone who is just trying to help out, it can be very … [Read more...] about Overview of Web Security Policies

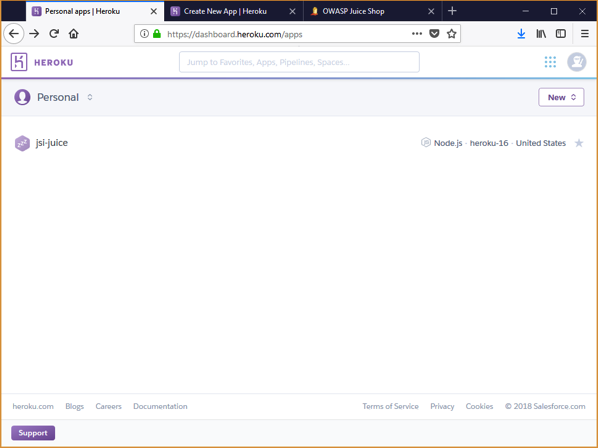

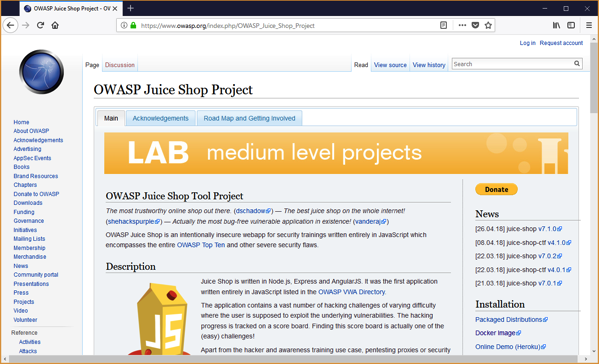

Installing OWASP JuiceShop with Heroku

Clients and students often ask me where people can go to learn hacking techniques for application security. For years, we have had many purposely vulnerable applications available to us. These applications provide a safe environment in which to learn more about hacking applications and the vulnerabilities they expose, without the legal ramifications. In this post I want to show you how simple it is to install the OWASP Juice Shop application using Heroku. Juice Shop is a … [Read more...] about Installing OWASP JuiceShop with Heroku

Installing OWASP JuiceShop with Docker

Clients and students often ask me where people can go to learn hacking techniques for application security. For years, we have had many purposely vulnerable applications available to us. These applications provide a safe environment in which to learn more about hacking applications and the vulnerabilities they expose, without the legal ramifications. In this post I want to show you how simple it is to install the OWASP Juice Shop application using a Docker container. … [Read more...] about Installing OWASP JuiceShop with Docker

Understanding Your Application Platform

Building applications today includes the use of some pretty impressive platforms. These platforms have so much built-in capability that many of the most common tasks are easily accomplished through simple method calls. As developers, we rely on these frameworks to provide a certain level of functionality, much of which we may never even use. When it comes to security, the platform can be a love/hate relationship. On the one hand, developers may have little control over how the platform handles … [Read more...] about Understanding Your Application Platform