The default Serv-U login screen can be replaced with a custom login page. When this happens, the page most likely does not display the version number. One way that may help identify the version is to visit the Mobile login page at /Web Client/Mobile/MLogin.htm. Why is this important? External security scans with tools like Nessus may report the Serv-U version incorrectly. Finding the actual version number is important in identifying potential false positives. … [Read more...] about How Can I Find The Version of Serv-U FTP on Custom Branded Login?
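The path above contains a space, so it has to be percent-encoded before it is used in a request. Here is a minimal sketch of building that URL. The helper name `mobile_login_url` and the host `ftp.example.com` are placeholders for illustration, not part of Serv-U. How the version appears on the returned page may differ between installs, so the sketch stops at building the URL.

```python
from urllib.parse import quote

# Path to the Mobile login page, as given in the post.
MOBILE_LOGIN_PATH = "/Web Client/Mobile/MLogin.htm"


def mobile_login_url(base_url: str) -> str:
    """Return the percent-encoded Mobile login URL for a Serv-U host.

    base_url is a hypothetical host such as "https://ftp.example.com".
    """
    # quote() keeps "/" intact and encodes the space as %20.
    return base_url.rstrip("/") + quote(MOBILE_LOGIN_PATH)


if __name__ == "__main__":
    print(mobile_login_url("https://ftp.example.com"))
```

Open the resulting URL in a browser, or through your proxy, and look for the version number on the page.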

pen testing

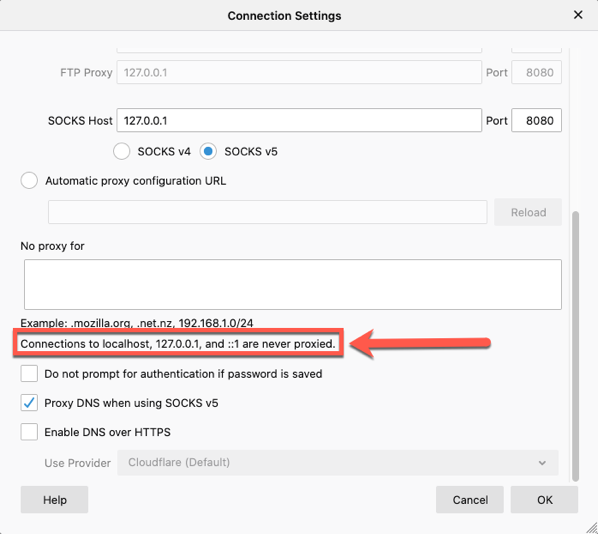

Proxying localhost on FireFox

When you think of application security testing, one of the most common tools is a web proxy. Whether it is Burp Suite from PortSwigger, ZAP from OWASP, Fiddler, or Charles Proxy, a proxy is heavily used. From time to time, you may find yourself testing a locally running application. Outside of some test labs or local development, this isn't really that common. But if you do find yourself testing a site on localhost, you may run into a roadblock in your browser. If you are using a recent version … [Read more...] about Proxying localhost on FireFox

Ep. 115: Is CSRF Really Dead?

In 2020, Chrome will default the SameSite attribute to Lax on all cookies. SameSite helps mitigate CSRF, but does that mean CSRF is dead? For more info go to https://www.developsec.com or follow us on Twitter (@developsec). Join the conversation: join our Slack channel. Email james@developsec.com for an invitation. DevelopSec provides application security training to add value to your application security program. Contact us today to see how we can help. … [Read more...] about Ep. 115: Is CSRF Really Dead?
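For readers who have not set the attribute by hand, here is a small sketch of a `Set-Cookie` header that declares `SameSite=Lax` explicitly rather than relying on the browser default. It uses Python's standard `http.cookies` module (the `samesite` key needs Python 3.8 or later). The cookie name `session` and its value are made up for illustration.

```python
from http.cookies import SimpleCookie


def build_session_cookie(value: str) -> str:
    """Return a Set-Cookie header value with SameSite=Lax set explicitly."""
    cookie = SimpleCookie()
    cookie["session"] = value            # hypothetical cookie name
    cookie["session"]["samesite"] = "Lax"
    cookie["session"]["httponly"] = True
    cookie["session"]["secure"] = True
    # OutputString() returns the header value without the "Set-Cookie:" prefix.
    return cookie["session"].OutputString()


if __name__ == "__main__":
    print("Set-Cookie:", build_session_cookie("abc123"))
```

Setting the attribute explicitly keeps the behavior consistent across browsers that have not adopted the new default.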

XSS in Script Tag

Cross-site scripting is a pretty common vulnerability, even with many of the new advances in UI frameworks. One of the first things we mention when discussing the vulnerability is to understand the context. Is it HTML, Attribute, JavaScript, etc.? Knowing the context helps us determine which characters can be used to expose the vulnerability. In this post, I want to take a quick look at placing data within a <script> tag. In particular, I want to look at how embedded … [Read more...] about XSS in Script Tag

What is the difference between Brute Force and Credential Stuffing?

Many people confuse brute force attacks with credential stuffing. To help clear this up, here is a simple description of the two. Both apply to the login form only. Brute Force: Brute force attacks on the login form consist of the attacker having a defined list (called a dictionary) of potential passwords. The attacker will then try each of these defined passwords with each username the attacker is trying to brute force. Put simply, this is a 1 (username) to many … [Read more...] about What is the difference between Brute Force and Credential Stuffing?
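The brute force pattern described above, where every dictionary password is tried against each targeted username, can be sketched as a simple pairing. This only generates the list of attempts, to show how quickly the count grows. It makes no network requests, and the usernames and passwords are invented for illustration.

```python
from itertools import product
from typing import Iterable, List, Tuple


def brute_force_attempts(
    usernames: Iterable[str], dictionary: Iterable[str]
) -> List[Tuple[str, str]]:
    """Pair each targeted username with every password in the dictionary."""
    # product() yields all passwords for the first username, then the next.
    return list(product(usernames, dictionary))


if __name__ == "__main__":
    attempts = brute_force_attempts(["alice"], ["123456", "password", "letmein"])
    for username, password in attempts:
        print(username, password)
```

Because the number of attempts per account equals the dictionary size, defenses such as rate limiting and lockout act on this one-username-to-many-passwords shape.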

Thinking about starting a bug bounty? Do this first.

Application security has become an important topic within our organizations. We have come to understand that the data that we deem sensitive and critical to our business is made available through these applications. With breaches happening all the time, it is critical to take reasonable steps to help protect that data by ensuring that our applications are implementing strong controls. Over the years, testing has been the main avenue for "implementing" security into applications. We have seen a … [Read more...] about Thinking about starting a bug bounty? Do this first.