How Serious is Username Enumeration

Looking through Twitter recently, I caught a very interesting thread that started with the following message: "What's the deal with the enumeration exclusions on all the @bugcrowd bounties. Clients just don't want to fix?" (Stephen Haywood, @averagesecguy, July 26, 2016). There were quite a few replies and a good discussion about how serious username enumeration flaws are. It is difficult to share a lot of thoughts in 140 characters, so I thought this would actually be … [Read more...] about How Serious is Username Enumeration

penetration testing

Understanding the “Why”

If I told you to adjust your seat before adjusting your mirror in your car, would you do it just because I said so, or because you understand why the order matters? Most of us retain concepts better when we can understand them logically. Developing applications involves a lot of moving pieces. An important piece in that process is implementing security controls to help protect the application, the company, and the users. In many organizations, security is heavily guided by an … [Read more...] about Understanding the “Why”

Introduction to Penetration Testing for Application Teams

In this presentation, James Jardine focuses on educating application teams on what a penetration test is and how to extract the most value from it. Application teams learn how to participate in the engagement and better understand the report. You can watch the recorded session at any time at: https://youtu.be/I1PukF8Glh0 … [Read more...] about Introduction to Penetration Testing for Application Teams

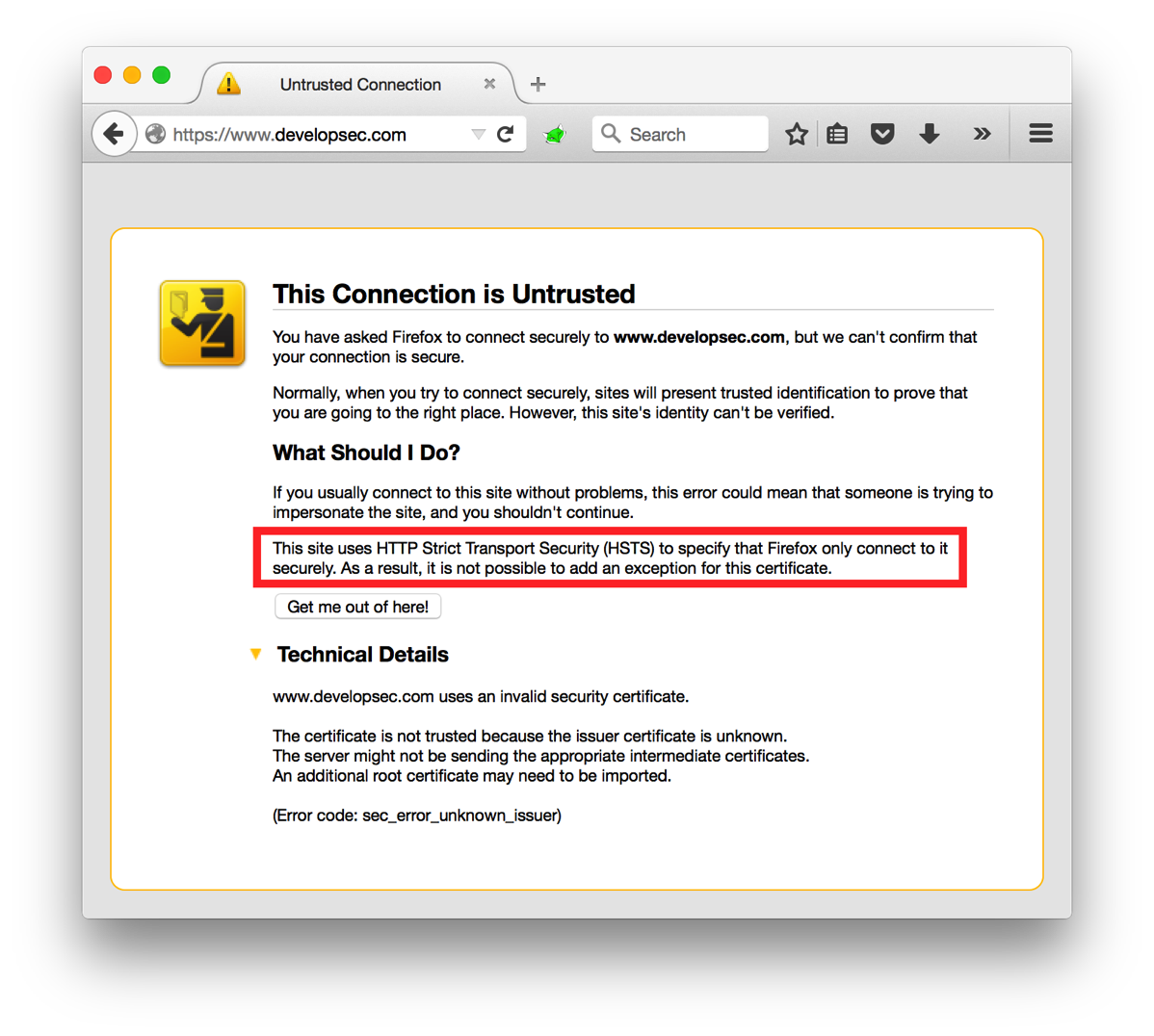

HTTP Strict Transport Security (HSTS): Overview

A while back I asked the question "Is HTTP being left behind for HTTPS?". If you are looking to make the move to an HTTPS-only web space, one of the settings you can configure is HTTP Strict Transport Security, or HSTS. The idea behind HSTS is that it tells the browser to communicate with the web site only over a secure channel. Even if the user attempts to switch to HTTP, the browser will rewrite the request to HTTPS before it sends it. HSTS is implemented as a response header with a … [Read more...] about HTTP Strict Transport Security (HSTS): Overview
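To make the response-header idea concrete, here is a minimal sketch in Python of how a site might attach the `Strict-Transport-Security` header to every response. It is not taken from the full post: the helper names `build_hsts_value` and `add_hsts` are hypothetical, the one-year `max-age` is only a commonly used value, and the wrapper assumes a standard WSGI application.

```python
def build_hsts_value(max_age_seconds=31536000, include_subdomains=True):
    """Return a Strict-Transport-Security header value (hypothetical helper).

    max_age_seconds is how long the browser should remember to use HTTPS only;
    31536000 (one year) is an assumed default, not a required value.
    """
    if max_age_seconds < 0:
        raise ValueError("max-age must be non-negative")
    directives = [f"max-age={max_age_seconds}"]
    if include_subdomains:
        directives.append("includeSubDomains")
    return "; ".join(directives)


def add_hsts(app, hsts_value=None):
    """Wrap a WSGI app so every response carries the HSTS header."""
    value = hsts_value or build_hsts_value()

    def wrapped(environ, start_response):
        def start_with_hsts(status, headers, exc_info=None):
            # Drop any existing copy so the header appears exactly once.
            headers = [
                (name, val)
                for name, val in headers
                if name.lower() != "strict-transport-security"
            ]
            headers.append(("Strict-Transport-Security", value))
            return start_response(status, headers, exc_info)

        return app(environ, start_with_hsts)

    return wrapped
```

With the defaults, the header sent to the browser reads `Strict-Transport-Security: max-age=31536000; includeSubDomains`. Browsers only honor this header when it arrives over HTTPS, so a wrapper like this is most useful on a site that already serves its content securely.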

Tips for Securing Test Servers/Devices on a Network

How many times have you stood up an instance of something on your network because you wanted to see how it worked, or because it looked really cool? You are trying out Jenkins, or you stood up a new Tomcat server for some internal testing. Do you practice good security procedures on these systems? Do you set strong passwords? Do you apply updates? These devices or applications are often overlooked by the person who stood them up, and are probably unknown to the security team. It may seem as though these systems … [Read more...] about Tips for Securing Test Servers/Devices on a Network